- January 25, 2023

- Posted by: sadmin

Zero-shot learning: a technique to crack diverse unseen classes with unannotated data

What is Zero-shot learning?

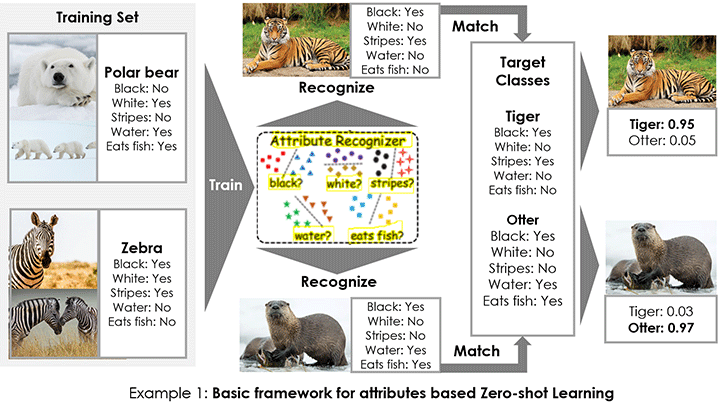

Zero-shot learning is a transfer learning paradigm that addresses the case where no training data is available for some classes.

Zero-shot learning aims to train a model that can classify text or objects from unseen classes (classes with no examples at training time) by transferring knowledge learned from seen classes, with the help of visual and semantic information. This information can take the form of manually defined attribute vectors, automatically extracted word vectors, context-based embeddings, or a combination of these.

Zero-shot learning methods are broadly either embedding-based or relation-based. Both build a shared embedding space for visual and semantic attributes, in which the names of seen and unseen classes are represented as high-dimensional vectors. This shared space is what bridges the gap between the seen and unseen classes.
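
The embedding-based idea can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the attribute space, the class vectors in `CLASS_EMBEDDINGS`, and the projection matrix `WEIGHTS` are all hypothetical values chosen by hand. In practice the projection would be learned from seen classes only.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

def project(features, weights):
    """Map input features into the semantic space with a linear map."""
    return [sum(w * x for w, x in zip(row, features)) for row in weights]

def zero_shot_predict(features, weights, class_embeddings):
    """Return the class whose semantic vector is closest to the projected input."""
    z = project(features, weights)
    return max(class_embeddings, key=lambda name: cosine(z, class_embeddings[name]))

# Hypothetical attribute space: [has_stripes, has_hooves, lives_in_water]
CLASS_EMBEDDINGS = {
    "horse": [0.0, 1.0, 0.0],    # seen during training
    "tiger": [1.0, 0.0, 0.0],    # seen during training
    "zebra": [1.0, 1.0, 0.0],    # unseen: described only by its attributes
    "dolphin": [0.0, 0.0, 1.0],  # unseen
}

# Hypothetical projection (4-d features -> 3-d attribute space),
# standing in for a map learned on the seen classes.
WEIGHTS = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
]

striped_hoofed_input = [0.9, 0.8, 0.1, 0.3]
prediction = zero_shot_predict(striped_hoofed_input, WEIGHTS, CLASS_EMBEDDINGS)
print(prediction)
```

Because classes are compared in the semantic space rather than by index, an unseen class such as "zebra" can be predicted as long as its attribute vector is available.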

Widely used zero-shot learning methods include Attribute Label Embedding (ALE), Deep Visual Semantic Embedding (DeViSE), Structured Joint Embedding (SJE), Latent Embeddings (LatEm), Cross-Modal Transfer (CMT), Semantic Similarity Embedding (SSE), Convex Combination of Semantic Embeddings (ConSE), Synthesized Classifiers (SynC), Hypergraph-based Attribute Predictor (HAP), Direct Attribute Prediction (DAP) and Indirect Attribute Prediction (IAP).
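
As one concrete illustration from this list, the core scoring step of Direct Attribute Prediction can be sketched as follows. This is a simplified sketch under stated assumptions: the attribute probabilities are hard-coded where a real system would take them from per-attribute classifiers trained on seen classes, attribute priors are treated as uniform and omitted, and the class signatures in `UNSEEN_CLASSES` are hypothetical.

```python
import math

def dap_scores(attribute_probs, class_signatures):
    """Score each class by the log-likelihood of its binary attribute signature."""
    scores = {}
    for name, signature in class_signatures.items():
        log_score = 0.0
        for p, has_attribute in zip(attribute_probs, signature):
            p = min(max(p, 1e-6), 1 - 1e-6)  # clamp to avoid log(0)
            log_score += math.log(p if has_attribute else 1.0 - p)
        scores[name] = log_score
    return scores

# Hypothetical binary signatures over [striped, four_legged, aquatic]
UNSEEN_CLASSES = {
    "zebra": [1, 1, 0],
    "dolphin": [0, 0, 1],
    "tiger_shark": [1, 0, 1],
}

# Assumed outputs of per-attribute classifiers for one input image:
# P(striped), P(four_legged), P(aquatic)
attribute_probs = [0.85, 0.10, 0.90]

scores = dap_scores(attribute_probs, UNSEEN_CLASSES)
best = max(scores, key=scores.get)
print(best)
```

The attribute classifiers never see the unseen classes. Only the signatures change when a new class is added, which is what makes the approach zero-shot.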

How different is Zero-shot learning from the conventional approach?

Zero-shot learning is designed around challenges that arise in real-world settings:

- It does not pre-define the size of the label space.

- It does not presume the availability of labelled data.

- It does not assume in advance how many classes, or which ones, it will have to handle, and it has no annotated data with which to train class-specific parameters.

- It interprets the problem through the aspects and meaning of the labels themselves. Conventional supervised classifiers fail here because they convert label names into indices, so they never actually make use of what the labels mean.

For example, given a text input, a zero-shot classifier can identify its topic, emotion, and situation even when no annotated data for those topics, emotions, or situations was used during training.
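
The contrast with index-based labels can be made concrete with a toy sketch. Here each label carries a short description, and labels are supplied at prediction time rather than fixed during training. Plain word overlap stands in for a real semantic model, and the function `classify`, the `ASPECTS` dictionary, and all label descriptions are hypothetical.

```python
def classify(text, label_descriptions):
    """Pick the label whose description shares the most words with the text."""
    cleaned = text.lower().replace(",", " ").replace(".", " ")
    words = set(cleaned.split())
    scores = {
        label: len(words & set(description.lower().split()))
        for label, description in label_descriptions.items()
    }
    return max(scores, key=scores.get)

# Hypothetical label sets for three aspects. None of them is seen in training:
# the classifier relies only on what the label descriptions mean.
ASPECTS = {
    "topic": {
        "health": "hospital doctor medicine illness",
        "sports": "match team goal player",
    },
    "emotion": {
        "fear": "afraid scared worried panic",
        "joy": "happy glad delighted",
    },
    "situation": {
        "medical assistance": "need doctor medicine injured help",
        "shelter": "homeless shelter housing roof",
    },
}

text = "We are worried, the injured need a doctor and medicine."
result = {aspect: classify(text, labels) for aspect, labels in ASPECTS.items()}
print(result)
```

Adding a new topic or emotion only requires a new entry in the dictionary, with no retraining. A conventional classifier with a fixed output layer cannot do this.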

Applications of Zero-shot learning

- In computer vision: image classification and visual recognition, object detection (given an input image, the trained detector should recognize and localize every object belonging to the unseen classes), scene classification, face verification, action recognition, surveillance, and sign language recognition (leveraging models learned from examples of seen sign classes to recognize instances of unseen signs).

- In natural language processing (NLP): text classification (predicting a class of the text that wasn’t seen by the model during training), topic detection, emotion detection, intent detection (training a model to detect user intents for which no labelled utterances are currently available), language detection and translation (translating between language pairs on which a Neural Machine Translation (NMT) system has never been trained), and semantic utterance classification (predicting the semantic domain of utterances without having seen examples of any such domain in the training set).

- Sequence labelling (a structured prediction task in which the system must assign the correct label to every token in the input sequence), common-sense question answering (training a model to answer questions about everyday common-sense knowledge), and relation extraction via reading comprehension (predicting a target relation that was not observed during training).

References for further reading

- A Review of Generalized Zero-Shot Learning Methods

- Benchmarking Zero-shot Text Classification: Datasets, Evaluation and Entailment Approach

- Zero-Shot Learning with Attribute Selection

- Survey of Zero-Shot Detection: Methods and Applications

- Zero-Shot Learning – the Good, the Bad and the Ugly

- Learning Hypergraph-regularized Attribute Predictors